When to fine-tune vs prompt-engineer

In 2026, prompt engineering plus few-shot examples plus RAG handles roughly 95% of LLM use cases. Fine-tuning is rarely the right first answer. Reach for it only when prompting has hit a measurable ceiling on reliability, latency, cost, or compliance, and even then there are usually three cheaper interventions to try first.

This post gives you the decision tree. We will cover the five conditions that genuinely justify a fine-tune, the cost math founders actually face (a $5,000 Anthropic fine-tune versus $50 per month of prompted Sonnet for the same job), the modern alternatives most teams skip, and how to run the call in ten minutes without writing a training script.

The 2026 default is prompt, few-shot, RAG

Three things changed since 2023 that pushed fine-tuning down the priority list.

Frontier models got smart enough that the floor on most enterprise tasks is already 85% accuracy with a decent prompt. Sonnet 4.5, GPT-5, and Gemini 2.5 Pro will classify, summarize, extract, and reason at a level that used to require a custom-trained model. For most product features, the question is no longer "can the model do it" but "can the model do it consistently."

Prompt caching changed the cost math. Anthropic, OpenAI, and Google now bill cache hits on a stable prompt prefix at roughly 10% to 40% of the base input-token price. If you have a 4,000-token system prompt with a rubric, examples, and tool definitions, you used to pay full price for it on every request. Now you pay full price (or a small write premium) once per cache window, and the discounted rate on every cache hit after that. That alone removes the cost argument for most fine-tunes under 100,000 daily requests.
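A rough way to see the write-once, read-many effect is to price only the stable prefix, since output tokens and per-request tokens cost the same either way. This is a sketch under stated assumptions: the 1.25x write and 0.10x read multipliers roughly match Anthropic's published pricing for its 5-minute cache at the time of writing, and the $3 per million input tokens is a placeholder, not a quoted price.

```python
def daily_prefix_cost(
    prefix_tokens: int,
    requests_per_day: int,
    cache_windows_per_day: int,
    price_per_mtok: float,
    write_multiplier: float = 1.25,  # assumed cache-write premium
    read_multiplier: float = 0.10,   # assumed cache-read rate
) -> tuple[float, float]:
    """Return (uncached_cost, cached_cost) in dollars per day for the stable prefix only."""
    per_token = price_per_mtok / 1_000_000
    uncached = prefix_tokens * requests_per_day * per_token
    # Pessimistic: assume one fresh cache write per window; every other request is a hit.
    writes = min(cache_windows_per_day, requests_per_day)
    reads = requests_per_day - writes
    cached = prefix_tokens * per_token * (writes * write_multiplier + reads * read_multiplier)
    return uncached, cached


uncached, cached = daily_prefix_cost(4_000, 10_000, 288, 3.0)
print(f"uncached ${uncached:,.2f}/day, cached ${cached:,.2f}/day")
```

With a 4,000-token prefix, 10,000 requests a day, and 288 five-minute windows, the prefix costs about $120 a day uncached versus about $16 cached. Counting one write per window overstates the cached cost when traffic is steady, because cache hits refresh the TTL.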

RAG and tool use cover the other two reasons people used to fine-tune. Domain knowledge that changes weekly belongs in a retrieval index, not in model weights. Structured output belongs in a tool-call schema or a Pydantic-validated function call, not in trained behavior. We cover the retrieval side in more depth in building production RAG: 2026 architecture.
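To make "structure belongs in a schema" concrete, here is a minimal sketch of a hypothetical extraction tool in the shape Anthropic's Messages API accepts for tools (a name, a description, and a JSON Schema under `input_schema`), plus a tiny stdlib check you can run on every response. The tool, its fields, and the `validate` helper are illustrative assumptions; in production you would use Pydantic or the `jsonschema` package rather than this stand-in.

```python
import json

# Hypothetical tool definition: name, description, and a JSON Schema for the input.
INVOICE_TOOL = {
    "name": "record_invoice",
    "description": "Record the fields extracted from one invoice.",
    "input_schema": {
        "type": "object",
        "properties": {
            "vendor": {"type": "string"},
            "total_cents": {"type": "integer"},
            "currency": {"type": "string", "enum": ["USD", "EUR", "GBP"]},
        },
        "required": ["vendor", "total_cents", "currency"],
        "additionalProperties": False,
    },
}

_TYPES = {"string": str, "integer": int, "number": (int, float), "boolean": bool}


def validate(payload: dict, schema: dict) -> list[str]:
    """Stand-in validator: checks required keys, extra keys, primitive types, and enums."""
    errors = []
    props = schema["properties"]
    for key in schema.get("required", []):
        if key not in payload:
            errors.append(f"missing {key}")
    for key, value in payload.items():
        spec = props.get(key)
        if spec is None:
            errors.append(f"unexpected {key}")
            continue
        # bool is a subclass of int in Python, so reject it unless the schema wants a boolean.
        is_stray_bool = isinstance(value, bool) and spec["type"] != "boolean"
        if is_stray_bool or not isinstance(value, _TYPES[spec["type"]]):
            errors.append(f"wrong type for {key}")
        elif "enum" in spec and value not in spec["enum"]:
            errors.append(f"{key} not in {spec['enum']}")
    return errors


def check_tool_output(raw: str, schema: dict) -> list[str]:
    """Parse a raw JSON string from the model and return a list of schema errors."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError:
        return ["invalid JSON"]
    if not isinstance(payload, dict):
        return ["top level is not an object"]
    return validate(payload, schema)
```

Counting how often `check_tool_output` returns a non-empty list on a fixed eval set gives you the schema-valid rate that the rest of this post keeps referring to.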

The practical rule: if you have not tried a clean prompt with three to five few-shot examples, a tool-call schema for structure, and RAG for any domain knowledge, you are not ready to think about fine-tuning yet.

Five conditions that genuinely justify fine-tuning

When you have done the work above and still hit a ceiling, fine-tuning earns a look. Here are the five real triggers.

1. Structured-output reliability at scale

You need better than 99.9% schema-valid output across millions of requests. Prompted models hit around 99% with good schemas; the last nines are where fine-tuning pulls ahead. A real example: a healthcare claims processor running 200,000 daily extractions, where even one malformed field per 1,000 means a human reviewer gets pulled in 200 times a day. Fine-tuning a smaller model on 5,000 verified examples drops that to a handful per week.
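The arithmetic behind that example is just volume times failure rate. This sketch assumes every schema-invalid output triggers exactly one human review, which is a simplification.

```python
def manual_reviews(daily_requests: int, valid_rate: float) -> float:
    """Expected schema-invalid outputs (and so human reviews) per day."""
    return daily_requests * (1.0 - valid_rate)


for rate in (0.99, 0.999, 0.9999):
    print(f"{rate:.2%} valid -> {manual_reviews(200_000, rate):,.0f} reviews/day")
```

At 200,000 requests a day this prints 2,000, 200, and 20 reviews a day. A handful per week (200,000 × 7 = 1.4 million requests) implies validity better than 99.999%, which is the range where prompting alone tends to stall.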

2. A narrow domain frontier models still hallucinate on

Niche legal sub-domains, scientific naming conventions, internal company taxonomies, regulated medical coding. A fine-tuned Qwen 7B hit 88% accuracy on power-outage classification while prompted Claude managed 31% on the same labels. That gap is real and it does not close with better prompts. The signal: you have tried three rounds of prompt iteration and the failure rate is stuck above 10% on a fixed-schema task.
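"Stuck above 10% after three rounds" is only a useful signal if you measure it the same way each round. A minimal sketch, assuming you rerun a fixed labeled eval set after every prompt revision; the `EvalRound` record and the numbers in the test are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class EvalRound:
    prompt_version: str
    failures: int
    total: int

    @property
    def failure_rate(self) -> float:
        return self.failures / self.total


def stuck_above(rounds: list[EvalRound], threshold: float = 0.10, min_rounds: int = 3) -> bool:
    """True when each of the last `min_rounds` prompt iterations fails more often than `threshold`."""
    if len(rounds) < min_rounds:
        return False
    return all(r.failure_rate > threshold for r in rounds[-min_rounds:])
```

Keeping the eval set fixed is the design choice that matters: if the examples change between rounds, a falling failure rate might just mean easier test cases.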

3. Latency-critical apps that need a smaller model

Voice agents, real-time copilots, and on-device features need sub-300ms first-token latency. Frontier models cannot hit that consistently. The pattern is to fine-tune a 3B or 7B open-source model to mimic a frontier model's behavior on your specific task (this is distillation in practice). You trade some quality for a 5x to 10x latency cut.

4. Inference cost above $10k per month

This is the cleanest economic signal. If you are spending $10,000 a month or more on a single repetitive task (classification, extraction, simple generation), the math starts to favor a fine-tune. The same Qwen 7B example: $789 per million classifications fine-tuned versus $11,485 per million prompted on Claude. At one million classifications per month, that is $128,000 saved per year against a $5,000 to $15,000 training cost. Below $10,000 per month of inference, you almost never recoup the engineering time.
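The break-even math from the Qwen 7B example fits in a few lines. The per-million prices come from the paragraph above; the function itself is an illustrative sketch that ignores engineering time and hosting overhead.

```python
def fine_tune_economics(
    monthly_volume_millions: float,
    prompted_cost_per_million: float,
    tuned_cost_per_million: float,
    training_cost: float,
) -> tuple[float, float]:
    """Return (annual_savings, payback_months) for moving one task to a fine-tuned model."""
    monthly_savings = monthly_volume_millions * (prompted_cost_per_million - tuned_cost_per_million)
    if monthly_savings <= 0:
        # The tuned model is not cheaper per request, so training never pays back on cost alone.
        return 0.0, float("inf")
    return monthly_savings * 12, training_cost / monthly_savings


annual, payback = fine_tune_economics(1, 11_485, 789, 15_000)
print(f"${annual:,.0f} saved per year, payback in {payback:.1f} months")
```

At one million classifications a month this gives $128,352 a year and a payback of about 1.4 months on a $15,000 run. At 100,000 a month (`0.1`), payback stretches to about 14 months, which is why volume matters more than the per-request gap.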

5. IP, security, on-prem requirements

Defense contractors, regulated finance, and some healthcare buyers will not accept "data goes to Anthropic." Fine-tuning a self-hosted Llama or Mistral derivative is the only path. Cost is no longer the question; compliance is. This is a small slice of the market but it is real.

If your situation does not match one of those five, fine-tuning is almost certainly the wrong move.

The cost math founders actually face

Here is the conversation we have with founders weekly. They have a feature that uses Sonnet, costs $50 a month in API spend, and works "okay but not great." Should they fine-tune?

| Approach | Setup cost | Per-request cost | When it wins |
|---|---|---|---|
| Prompt + few-shot | $0 | Standard API | Up to ~50k daily requests, varied tasks |
| Prompt caching | $0 | 10-40% of standard | Stable system prompt, repeated context |
| RAG | $2-10k | API + vector DB | Domain knowledge changes weekly |
| DPO / preference tuning | $500-3k | Standard API | Style or tone consistency at scale |
| PEFT / LoRA | $1-5k | Self-hosted GPU | Narrow domain, on-prem, latency |
| Full fine-tune | $5-50k | Smaller model API or self-hosted | 100k+ daily requests, fixed schema |

A typical Anthropic-managed fine-tune on Sonnet runs $3,000 to $10,000 for a usable training run, plus engineer time to curate 1,000 to 5,000 high-quality labeled examples (call that another $5,000 to $15,000 of effort). Compare that to $50 per month of prompted Sonnet, which is $600 a year. Even eliminating that $600 entirely would not pay back the run, and it will not be eliminated, because you still pay inference. So the fine-tune has to unlock a quality jump worth $14,000+ in product value.

For most $50-per-month workloads, the answer is no. For workloads at $5,000+ per month, run the numbers carefully. Above $10,000 per month, fine-tuning is on the table.
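A three-year comparison makes the $50-per-month case stark. This sketch uses the cheapest end of both ranges above ($3,000 training plus $5,000 curation) and deliberately assumes the fine-tuned model's inference is free, which is the best case the fine-tune could ever get.

```python
def three_year_comparison(
    prompted_monthly: float,
    training_cost: float,
    curation_cost: float,
    tuned_monthly: float = 0.0,  # best case: assume the tuned model costs nothing to run
    months: int = 36,
) -> tuple[float, float]:
    """Return (prompted_total, fine_tuned_total) in dollars over the horizon."""
    prompted_total = prompted_monthly * months
    fine_tuned_total = training_cost + curation_cost + tuned_monthly * months
    return prompted_total, fine_tuned_total


prompted, tuned = three_year_comparison(50, 3_000, 5_000)
print(f"prompted ${prompted:,.0f} vs fine-tuned ${tuned:,.0f} over three years")
```

Even in that best case the prompted feature costs $1,800 over three years against $8,000 for the fine-tune, and saving the full $600 a year would take more than 13 years to recoup $8,000.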

Modern alternatives most teams skip

Four options sit between "better prompt" and "full fine-tune," and almost no one reaches for them in the right order.

Claude prompt caching. If your system prompt is over 1,000 tokens and stable, caching cuts the cost of those prompt tokens by 60% to 90% on every cache hit. It is usually a one-line change in the API call. It is the first thing to try when cost becomes the issue, and it removes the cost case for fine-tuning entirely on most workloads under 100k daily requests. Our guide on how to choose between OpenAI, Anthropic, Google models covers when each provider's caching wins.
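On Anthropic's Messages API, the one-line change is a `cache_control` marker on the system prompt block. The sketch below only builds the request arguments, so it runs without the SDK or an API key; the model name and `max_tokens` value are placeholders you would replace.

```python
def build_cached_request(system_prompt: str, user_message: str, model: str) -> dict:
    """Build Messages API keyword arguments with the stable system prompt marked cacheable."""
    return {
        "model": model,  # placeholder: use the model ID you actually deploy
        "max_tokens": 1024,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # The one-line change: everything up to this block becomes a cacheable prefix.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        "messages": [{"role": "user", "content": user_message}],
    }
```

With the official SDK you would pass this as `anthropic.Anthropic().messages.create(**build_cached_request(...))` and confirm hits through the `cache_creation_input_tokens` and `cache_read_input_tokens` fields in the response's `usage`. Keep anything that varies per request out of the system block, or every call becomes a cache miss.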

OpenAI DPO (Direct Preference Optimization). When the issue is style, tone, or "the model picks the wrong option among valid ones," DPO trains on preference pairs (here is a good answer, here is a worse answer) instead of supervised labels. Setup is $500 to $3,000 and the data is easier to collect: pull pairs from your existing logs where you flagged one as better. Use this when prompting cannot enforce a stylistic preference reliably.
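Turning flagged log pairs into DPO training data is mostly reshaping. This sketch emits the preference format OpenAI documents for DPO fine-tuning at the time of writing (`input.messages`, `preferred_output`, `non_preferred_output`); confirm against the current fine-tuning guide before uploading. The log record keys `prompt`, `better`, and `worse` are hypothetical.

```python
import json


def to_dpo_jsonl(logged_pairs: list[dict]) -> str:
    """Convert logged (prompt, better, worse) records into one JSON line per preference pair."""
    lines = []
    for rec in logged_pairs:
        example = {
            "input": {"messages": [{"role": "user", "content": rec["prompt"]}]},
            "preferred_output": [{"role": "assistant", "content": rec["better"]}],
            "non_preferred_output": [{"role": "assistant", "content": rec["worse"]}],
        }
        lines.append(json.dumps(example))
    return "\n".join(lines)
```

Both outputs in a pair should answer the same prompt and both should be valid; DPO learns from the difference between them, so pairs where the worse answer is simply broken teach less than pairs that differ only in style.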

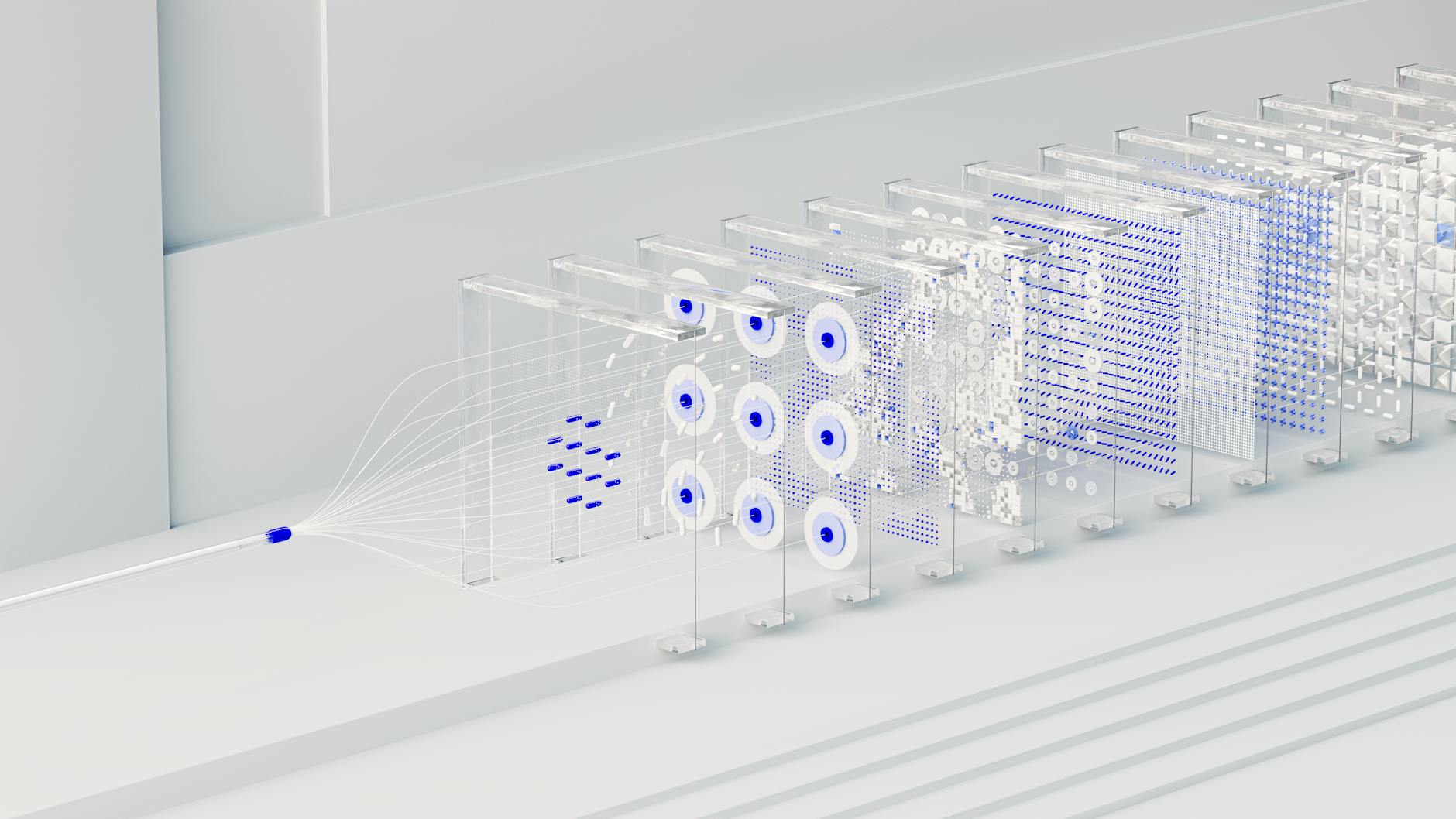

Model distillation. You log thousands of frontier-model responses on real production traffic, then fine-tune a smaller open-source model to imitate them. The small model serves at 5x to 20x lower cost and 3x to 10x lower latency. The labs are widely reported to use the same technique for their own Haiku, Mini, and Flash tiers. You can do it yourself with Together AI, Modal, or a self-hosted Unsloth setup.
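The data side of distillation is converting teacher logs into supervised examples for the student. This sketch writes the common chat-message JSONL shape (`{"messages": [...]}`) that many fine-tuning services and trainers accept; check what your trainer expects. The log keys `input`, `teacher_output`, and the `accepted` flag are hypothetical stand-ins for however you record production calls.

```python
import json


def to_distillation_jsonl(logs: list[dict], system_prompt: str) -> str:
    """Turn logged frontier-model calls into chat-format SFT examples for a smaller student model.

    Only calls that passed downstream checks (the hypothetical "accepted" flag) are kept,
    so the student imitates the teacher's good outputs rather than its mistakes.
    """
    lines = []
    for call in logs:
        if not call.get("accepted", False):
            continue
        lines.append(json.dumps({
            "messages": [
                {"role": "system", "content": system_prompt},
                {"role": "user", "content": call["input"]},
                {"role": "assistant", "content": call["teacher_output"]},
            ]
        }))
    return "\n".join(lines)
```

Filtering on accepted outputs is the key design choice: a student trained on unfiltered logs inherits the teacher's failure rate along with its behavior.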

PEFT and LoRA. Parameter-efficient fine-tuning trains a small adapter (a few million parameters) on top of a frozen base model. Cost is $1,000 to $5,000, and with Unsloth you can run LoRA training on a 17B-parameter model in 15GB of VRAM (a single consumer GPU) by loading the frozen base in 4-bit precision (QLoRA). This is the right fit for narrow domain work where you need a behavior change but not a wholesale model rewrite.
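Why LoRA is cheap, in a few lines: the frozen weight W is left alone and only two thin matrices A (r × k) and B (d × r) are trained, following the standard LoRA formulation h = Wx + (alpha / r)·BAx. This pure-Python sketch uses toy lists instead of a tensor library and is meant to show the shape of the idea, not to train anything. The memory helper also shows why a 17B model only fits in about 15GB with a 4-bit base: at 16 bits the weights alone would need 34GB.

```python
Matrix = list[list[float]]


def matvec(m: Matrix, v: list[float]) -> list[float]:
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def lora_forward(W: Matrix, A: Matrix, B: Matrix, x: list[float], alpha: float, r: int) -> list[float]:
    """h = W x + (alpha / r) * B (A x). W stays frozen; only A and B would be trained."""
    base = matvec(W, x)
    delta = matvec(B, matvec(A, x))
    scale = alpha / r
    return [h + scale * d for h, d in zip(base, delta)]


def lora_params(d: int, k: int, r: int) -> int:
    """Trainable parameters a rank-r adapter adds to one d x k weight matrix."""
    return r * (d + k)


def base_weights_gb(n_params: float, bits: int) -> float:
    """Memory for the frozen base weights alone (activations and optimizer state excluded)."""
    return n_params * bits / 8 / 1e9
```

B is normally initialized to zeros, so the adapter starts as a no-op and training only learns the delta. At rank 16, one 4096 × 4096 projection gains 131,072 trainable parameters, which is how a full adapter stays in the low millions.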

The order to try them in: prompt + few-shot, then caching, then RAG, then DPO, then LoRA, then full fine-tune. Most teams stop at step three.

A decision tree you can run in 10 minutes

Walk through these six questions in order. Stop at the first one that routes you to an action.

- Have you tried a clean prompt with 3 to 5 few-shot examples and a tool-call schema for structure? No → write that first. You are not ready.

- Are you spending less than $1,000 per month on the task? Yes → stay prompted. Fine-tuning will not pay back.

- Does your accuracy ceiling sit above 95% with prompting? Yes → ship it. Polish with caching.

- Is the failure pattern about knowledge, not behavior (the model does not know your data)? Yes → RAG, not fine-tuning.

- Is the failure pattern about style or preference (the model picks the wrong option among valid ones)? Yes → DPO, not full fine-tune.

- Are you above $10,000 per month inference, or do you need sub-300ms latency, or do you have on-prem compliance requirements? Yes → fine-tune (LoRA first, full SFT only if LoRA fails).

If you got to question 6 and answered yes, you have a real fine-tuning case. If you got there and answered no, the right move is to keep prompting and revisit in three months.
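The tree is simple enough to encode, which is handy if you want to run it across every LLM feature in a codebase. This is a direct sketch of the six questions above; the `Task` fields are hypothetical names for the answers, and the $10,000 threshold is treated as inclusive.

```python
from dataclasses import dataclass


@dataclass
class Task:
    has_clean_prompt_and_schema: bool
    monthly_spend: float
    prompted_accuracy: float
    failure_is_knowledge: bool
    failure_is_preference: bool
    needs_sub_300ms: bool
    on_prem_required: bool


def decide(t: Task) -> str:
    """Walk the six questions in order and return the first action they route to."""
    if not t.has_clean_prompt_and_schema:
        return "write the prompt first"
    if t.monthly_spend < 1_000:
        return "stay prompted"
    if t.prompted_accuracy > 0.95:
        return "ship it, polish with caching"
    if t.failure_is_knowledge:
        return "RAG"
    if t.failure_is_preference:
        return "DPO"
    if t.monthly_spend >= 10_000 or t.needs_sub_300ms or t.on_prem_required:
        return "fine-tune (LoRA first, full SFT only if LoRA fails)"
    return "keep prompting, revisit in three months"
```

Because the checks run in order, a cheap answer early on (low spend, high accuracy) short-circuits the rest, which is the whole point of the tree.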

What this means for hiring

The technical skill of running a fine-tune is no longer the bottleneck. Anthropic, OpenAI, Google, Together, Modal, and Hugging Face all expose managed fine-tuning that takes a JSONL file and returns a trained model. A senior engineer can ship one in two days.

The bottleneck is judgment: knowing when to stop prompting. We see this constantly. A team spends six weeks fine-tuning a model and ships a 2% accuracy gain on a workload that costs them $200 a month. The right answer was prompt caching plus a tool schema, three hours of work.

That judgment is what AI-native engineering actually means in 2026. It shows up as: trying the cheap interventions first, measuring before training, knowing the modern alternatives, and being willing to ship a 92%-accurate prompt instead of chasing 96% with a model rewrite. We go deeper in what we mean by 'AI-native engineer' and in what changes when you write code with AI.

Every engineer on Cadence is AI-native by default. The voice interview screens specifically for this kind of judgment: Cursor and Claude Code fluency, prompt-as-spec discipline, and the instinct to reach for caching, RAG, or DPO before reaching for a training run. There is no non-AI-native option on the platform. Across our 12,800-engineer pool, the median time to first commit on AI-heavy projects is 27 hours, because the engineers do not waste two weeks rebuilding what a smarter prompt would have delivered.

If you are weighing a build-versus-train decision on a real feature, the Decide tool gives you a Build, Buy, or Book recommendation in two minutes based on the same framework above.

What to do this week

Pick the LLM feature that frustrates you most. Run the six-question tree above. If you stop at question 1 or 2, you have your answer (write the prompt, or stay prompted and ship it). If you stop at 3 or 4, you have a clear scope (caching or RAG). If you stop at 5, or answer yes at 6, you have a real tuning candidate (DPO, or LoRA before full SFT), and the cost math is on your side.

If the engineer who would do the work is not yet on your team, this is exactly the kind of bounded scope (one to four weeks, clear deliverable) that fits the Cadence model. Book a senior engineer for the week, hand them the failing prompt and the metrics, get a written recommendation back. The 48-hour trial covers you if the fit is wrong.

If you are stuck on a build-versus-train call right now, run the Decide tool for a free recommendation, or book a senior AI engineer for one week on Cadence to scope the work end to end. Weekly billing, replace any week, no notice period.

FAQ

Is prompt engineering still relevant in 2026?

Yes. Frontier models reward better prompts as much as ever, and prompt caching makes long, structured prompts affordable. It is the first lever for almost every LLM problem and the last one most teams should give up on.

How much does it cost to fine-tune Claude or GPT?

An Anthropic Sonnet fine-tune typically lands at $3,000 to $10,000 for a usable run, plus engineer time to curate the dataset. OpenAI DPO and SFT runs are $500 to $5,000 depending on data volume. LoRA compute on open-source models can stay under $500 with consumer GPUs and Unsloth, within the $1,000 to $5,000 total setup cost in the table above.

When is RAG better than fine-tuning?

RAG wins when knowledge changes often (docs, prices, policies, internal data) and when citations matter to the user. Fine-tuning wins when you need a behavior change, not a knowledge change. If your reflex is "the model does not know X," reach for RAG. If your reflex is "the model does not act like X," consider fine-tuning.

Can a small startup fine-tune a model?

Technically yes; the tooling is mature. Practically, almost no startup needs to fine-tune before it spends $10,000 per month on inference for a single task. The judgment to know when to stop prompting and start training is the real skill, and it usually argues for staying prompted.

What about distillation versus fine-tuning?

Distillation is a kind of fine-tuning where the training data comes from a larger model's outputs. It is the right move when you need lower latency or cost on a task that prompted Claude or GPT already does well. Use it after you have validated the task on the frontier model, not before.